Machine Learning in Power Grid Load Management


Machine learning is reshaping how power grids manage electricity demand and supply. By processing large datasets quickly, it helps grid operators make better decisions, prevent outages, and integrate renewable energy sources more effectively. Traditional methods struggle with the complexity of modern grids, but machine learning offers tools to address these challenges.

Key highlights from the article:

- Applications: Machine learning improves load forecasting, real-time optimization, predictive maintenance, and renewable energy integration.

- Techniques: Algorithms like Support Vector Regression (SVR), Long Short-Term Memory (LSTM) networks, and Reinforcement Learning (RL) tackle specific grid challenges.

- Benefits: Reduced costs, improved reliability, and better handling of renewable energy variability.

- Challenges: Limited data quality, integration with older systems, and scalability concerns.

Machine learning is already proving its value in real-world scenarios, like decentralized load shedding models and renewable energy forecasting. These advancements are helping utilities balance demand and supply while reducing operational costs and emissions.



Machine Learning Algorithms for Load Balancing

Machine Learning Algorithms for Power Grid Load Management: SVR vs LSTM vs Reinforcement Learning Comparison

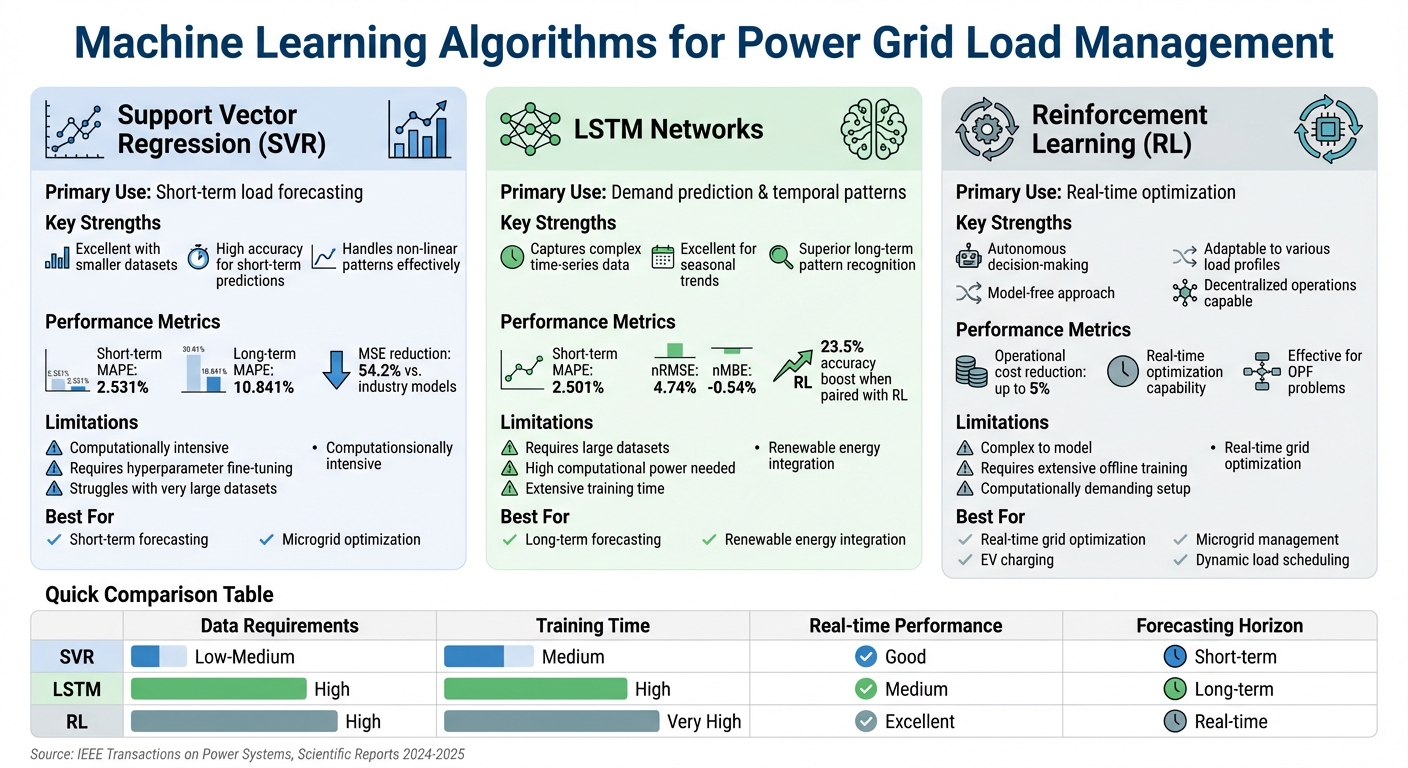

Machine learning is proving to be a game-changer in grid control, especially when it comes to load balancing. Among the many algorithms tested, three have become key players: Support Vector Regression (SVR), Long Short-Term Memory (LSTM) networks, and Reinforcement Learning (RL). Each of these offers unique advantages for tackling the challenges of unpredictability and real-time adjustments in power grid management.

Support Vector Regression (SVR) for Load Forecasting

SVR is a powerful tool for short-term load forecasting, especially when working with smaller datasets. By mapping complex consumption patterns into a high-dimensional space, it achieves impressive accuracy levels. For instance, SVR's short-term forecasting accuracy is comparable to LSTM (Mean Absolute Percentage Error: 2.531% vs. 2.501%), and it significantly outperforms LSTM in long-term predictions (10.841% vs. 69.947% MAPE). Moreover, an advanced SVR framework has been shown to reduce Mean Squared Error (MSE) by 54.2% compared to industry persistence models.

However, SVR's success relies heavily on tuning its hyperparameters. This is particularly true when using Radial Basis Function (RBF) kernels, which are essential for capturing non-linear relationships in the data. While SVR is highly effective, it treats each input as a fixed feature vector rather than an ordered sequence, so its strength is short-term accuracy rather than capturing complex temporal patterns.
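To make the role of the RBF kernel concrete, here is a minimal sketch of the kernel function itself, k(x, y) = exp(-gamma * ||x - y||^2). The feature choice (scaled hour of day and temperature) and the gamma values are assumptions for illustration only, not taken from any study cited here.

```python
import math

def rbf_kernel(x, y, gamma):
    """RBF kernel: exp(-gamma * squared Euclidean distance)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)

# Hypothetical scaled features: (hour of day / 24, temperature in C / 40)
morning = (8 / 24, 15 / 40)
evening = (18 / 24, 25 / 40)

# Larger gamma makes similarity fall off faster with distance
for gamma in (0.1, 1.0, 10.0):
    similarity = rbf_kernel(morning, evening, gamma)
    print(f"gamma={gamma}: similarity={similarity:.4f}")
```

A small gamma treats distant operating points as similar and gives a smoother fit. A large gamma makes the model react only to very close neighbors, which raises the risk of overfitting. This is one reason gamma is usually tuned together with the other SVR hyperparameters.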

LSTM Networks for Demand Prediction

When it comes to understanding temporal patterns and seasonal trends, LSTM networks excel. These networks are designed to handle time-series data with complex dependencies, making them ideal for ultra-short and short-term forecasting. For example, in tests involving building-level electricity consumption, LSTM achieved a normalized Root Mean Square Error (nRMSE) of 4.74% and a normalized Mean Bias Error (nMBE) of -0.54%. Another study reported nMBE as low as -0.02% and nRMSE at 2.76%.

LSTMs are particularly powerful when paired with Reinforcement Learning, with studies showing a 23.5% boost in prediction accuracy in scenarios with high monthly electricity consumption variations. However, this level of sophistication comes at a cost. LSTMs demand large datasets and significant computational power to train effectively, making them more suitable for utilities with advanced data infrastructures.
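The nRMSE and nMBE figures quoted in this section can be computed along these lines. This sketch assumes normalization by the mean of the observed values and a bias defined as predicted minus actual. Both are common conventions, but the cited studies may use different ones. The sample loads are made up.

```python
import math

def normalized_errors(actual, predicted):
    """Return (nRMSE, nMBE) in percent, normalized by the mean actual value."""
    n = len(actual)
    mean_actual = sum(actual) / n
    errors = [p - a for a, p in zip(actual, predicted)]
    rmse = math.sqrt(sum(e ** 2 for e in errors) / n)
    mbe = sum(errors) / n  # negative means the model under-predicts on average
    return 100 * rmse / mean_actual, 100 * mbe / mean_actual

# Hypothetical building loads in kW
actual_kw = [100, 120, 80, 100]
predicted_kw = [98, 123, 79, 99]

nrmse, nmbe = normalized_errors(actual_kw, predicted_kw)
print(f"nRMSE={nrmse:.2f}%, nMBE={nmbe:.2f}%")  # nRMSE=1.94%, nMBE=-0.25%
```

Reporting both numbers is useful because a model can have a near-zero bias while still making large errors that cancel out.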

Reinforcement Learning for Real-Time Optimization

Reinforcement Learning (RL) takes a different approach by focusing on real-time optimization. RL agents learn to make decisions autonomously, maximizing rewards while balancing cost-efficiency and reliability. This approach is especially effective for solving the Optimal Power Flow (OPF) problem, which traditional methods struggle to handle in real-time due to computational complexity. RL agents can be trained offline and then applied directly to various load profiles, making them highly adaptable.

The practical benefits of RL are substantial. For example, integrating RL-based forecasts into power system optimization frameworks can reduce operational costs by up to 5% through more efficient unit commitment and dispatch decisions. Applications include managing microgrids (balancing solar PV, wind, and energy storage), optimizing electric vehicle charging, and scheduling dynamic loads. Additionally, Multi-Agent Reinforcement Learning (MARL) allows decentralized power units to collaborate autonomously, offering enhanced flexibility for grid management.
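The idea of an agent learning to maximize reward can be illustrated with a toy battery-arbitrage problem. This is a minimal sketch, not a grid-ready controller. The prices, battery size, and learning settings are all assumptions. To keep the run deterministic, the Q-learning update is applied over exhaustive sweeps of every state and action instead of sampled exploration.

```python
PRICES = [20, 50, 20, 80]  # hypothetical energy price for each hour
MAX_SOC = 2                # assumed battery capacity, in energy units
ACTIONS = (-1, 0, 1)       # discharge one unit, idle, charge one unit
ALPHA, GAMMA, SWEEPS = 0.5, 1.0, 200

def step(hour, soc, action):
    """Return (reward, next_soc), or None if the action is infeasible."""
    next_soc = soc + action
    if not 0 <= next_soc <= MAX_SOC:
        return None
    return -PRICES[hour] * action, next_soc  # charging costs, discharging earns

def train():
    horizon = len(PRICES)
    # q[hour][soc][action]; the extra final hour holds zero terminal values
    q = [[{a: 0.0 for a in ACTIONS} for _ in range(MAX_SOC + 1)]
         for _ in range(horizon + 1)]
    for _ in range(SWEEPS):
        for hour in range(horizon):
            for soc in range(MAX_SOC + 1):
                for action in ACTIONS:
                    outcome = step(hour, soc, action)
                    if outcome is None:
                        q[hour][soc][action] = float("-inf")
                        continue
                    reward, next_soc = outcome
                    target = reward + GAMMA * max(q[hour + 1][next_soc].values())
                    # Standard Q-learning update toward the bootstrapped target
                    q[hour][soc][action] += ALPHA * (target - q[hour][soc][action])
    return q

def greedy_rollout(q, soc=0):
    """Follow the learned policy from an empty battery."""
    actions, profit = [], 0
    for hour in range(len(PRICES)):
        action = max(ACTIONS, key=lambda a: q[hour][soc][a])
        reward, soc = step(hour, soc, action)
        actions.append(action)
        profit += reward
    return actions, profit

q_table = train()
actions, profit = greedy_rollout(q_table)
print(actions, profit)
```

With these hypothetical prices, the learned policy charges in the two cheap hours and discharges in the two expensive ones. Real grid applications replace the lookup table with a neural network and the toy environment with a power system simulator, but the reward-driven update is the same in spirit.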

Machine Learning Applications in Power Grids

Machine learning is transforming power grid operations by improving predictive maintenance, handling the variability of renewable energy, and fine-tuning local power networks for better performance.

Predictive Maintenance and Fault Detection

Did you know that equipment failures - mainly caused by insulation degradation and partial discharge - are responsible for over 70% of electrical grid outages? A striking example is the 2003 transformer failure in London, which, paired with a faulty relay, caused an energy loss estimated at £6–9 million. Traditional maintenance methods, like periodic helicopter-based thermal imaging, often miss early warning signs, leaving systems vulnerable. Machine learning offers a smarter solution. By analyzing sensor data continuously through models like Support Vector Machines (SVM), Artificial Neural Networks (ANN), and Decision Trees, utilities can detect partial discharge activity and classify fault types with precision. This approach shifts maintenance schedules from fixed intervals to condition-based strategies, cutting both downtime and costs.

Load Forecasting with Renewable Energy Integration

Machine learning also excels in managing the challenges posed by renewable energy sources like solar and wind, which are naturally unpredictable. Balancing supply and demand in real time requires accurate forecasting, and this is where machine learning shines. Support Vector Regression (SVR) models analyze historical production and weather data to predict solar and wind output with impressive accuracy - achieving a mean squared error (MSE) of 2.002 for solar power and 3.059 for wind energy. The results? An 8.4% reduction in operating costs, a 10% improvement in supply-demand balance, and a 15% drop in peak load. These advancements have also boosted renewable energy utilization by 12%, a critical gain as global renewable capacity is expected to grow by 60% by 2026, with solar and wind dominating new installations.

"Machine Learning (ML) methods can often model complex and non-linear data better than the statistical models." - IEEE Access

Microgrid Energy Management Systems (MGMS)

On a more localized scale, machine learning is revolutionizing microgrid operations. Microgrids demand precise coordination of energy generation, storage, and consumption. In October 2020, researchers introduced a machine learning–driven energy management model for three energy districts in Texas. Two districts (ED1 and ED2) at Copano Bay used wind turbines, while Brownsville's ED3 relied on solar PV arrays. By combining Gaussian Process Regression (GPR) with genetic algorithms, the system optimized energy surplus and grid revenue, outperforming traditional methods through adaptive service agreements. Machine learning also enables microgrids to make quick decisions - like when to draw power from solar panels, discharge batteries, or tap into the main grid - ensuring stability even during fluctuations in renewable energy generation. With the global microgrid market expected to grow from $8.1 billion in 2019 to nearly $40 billion by 2028, machine learning is becoming a cornerstone of modern power grid systems.

Performance Metrics and Benefits

When choosing a machine learning algorithm for grid load management, the decision must lead to measurable improvements in cost efficiency and reliability. Even a small 1% increase in load forecast error can cost utilities an additional $10 million in operational expenses. This highlights the importance of understanding algorithm performance, as these choices directly affect financial outcomes and grid stability.

Machine learning goes beyond just improving forecasting accuracy. It also reduces costs, minimizes power losses, and enhances resource allocation. These benefits give grid operators the tools they need to maintain secure and efficient operations.

"The main idea of machine learning methods is to transfer the computational overhead from online optimization to offline training processes by utilizing extensive historical data."

- H. Khaloie, M. Dolányi, J.-F. Toubeau, F. Vallée

Utilities evaluate algorithm performance using metrics like Mean Absolute Percentage Error (MAPE) for forecasting accuracy, training and testing accuracy for reliability, and real-time inference speed for operational needs. For instance, Enhanced Decision Tree Classifiers have achieved 99.70% testing accuracy in short-term forecasts using data from the New York Independent System Operator. Consistent performance over time, or forecasting stability, is equally critical for ensuring safe and reliable grid operations.
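For reference, MAPE is the mean of the absolute forecast errors expressed as a percentage of the actual values. A minimal sketch follows, with made-up load values. The zero check is there because MAPE is undefined when an actual value is zero.

```python
def mape(actual, forecast):
    """Mean Absolute Percentage Error, in percent."""
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    errors = [abs(a - f) / abs(a) for a, f in zip(actual, forecast)]
    return 100 * sum(errors) / len(errors)

# Hypothetical hourly loads in MW
actual_load = [100, 200, 400]
forecast_load = [110, 190, 400]

print(f"MAPE: {mape(actual_load, forecast_load):.2f}%")  # MAPE: 5.00%
```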

Algorithm Comparison: SVR, LSTM, and Reinforcement Learning

Different machine learning approaches cater to specific challenges in grid load management. Here's a breakdown of their strengths and limitations:

| Algorithm | Advantages | Disadvantages | Key Metrics |
|---|---|---|---|
| Support Vector Regression (SVR) | Great for short-term forecasting and handling non-linear data. | Computationally intensive and struggles with very large datasets. | MAPE; training and testing accuracy |
| LSTM Networks | Excellent at capturing time-series data and long-term patterns. | Requires extensive historical data and high processing power. | Prediction error; temporal accuracy |
| Reinforcement Learning | Optimizes real-time operations and demand-response without needing explicit system models. | Complex to model and requires extensive offline training. | Cumulative reward; grid stability; operational cost impact |

SVR is particularly effective for short-term forecasting and identifying potential system failures. Its ability to work with non-linear data makes it valuable, but its computational demands can be a challenge for large datasets.

LSTM networks excel in capturing complex temporal patterns over extended periods. While they demand significant historical data and computational resources, their ability to tackle challenges like renewable energy intermittency and fluctuating consumption patterns makes them indispensable for long-term load predictions.

Reinforcement Learning focuses on real-time optimization and autonomous decision-making. Its model-free approach eliminates the need for explicit grid models, making it well-suited for decentralized operations. Decentralized multi-agent RL systems, in particular, can outperform centralized models when managing large-scale grid complexities.

"The control based on reinforcement learning (RL) of solving power management problems in advanced power distribution systems is the most convenient alternative modern choice."

- Mudhafar Al-Saadi, Maher Al-Greer, and Michael Short

Each of these approaches offers distinct advantages, whether the goal is forecasting accuracy, understanding long-term patterns, or optimizing real-time operations. By aligning algorithm capabilities with operational needs, utilities can make smarter decisions for effective grid management.

Implementation Challenges and Solutions

Deploying machine learning (ML) for grid load management comes with its fair share of hurdles. Moving from theoretical concepts to practical application means tackling technical and operational obstacles like limited data, compatibility issues with existing systems, and financial constraints - all of which can slow down even the most advanced algorithms.

Data Availability and Quality

The lack of high-quality data is one of the biggest roadblocks to adopting ML in power grids. Many current grid instruments - such as Advanced Metering Infrastructure (AMI) and Distribution-level Phasor Measurement Units (D-PMUs) - fall short in providing the consistent, synchronized data that ML models need to function effectively at scale.

"The primary challenge preventing wide scale adoption of AI/ML methods in planning and operations has been consistent access to high-quality data across the distribution grid."

Several factors make data collection difficult, including inconsistent standards, outdated communication systems, and the unpredictable nature of renewable energy sources. Solutions to these challenges include adopting Continuous Point On Wave (CPOW) data collection for better synchronization, implementing universal data protocols and Internet of Energy (IoE) standards, and upgrading communication infrastructure with edge computing to lower bandwidth requirements. These steps are critical to ensuring ML models can be effectively integrated into grid systems.

Integration with Existing Infrastructure

Integrating ML into legacy grid systems without causing disruptions requires careful planning. Traditional grids weren’t built with advanced technologies like AI in mind, making retrofitting a complex process. Yet, this integration is essential for deploying advanced load management solutions like Support Vector Regression (SVR), Long Short-Term Memory (LSTM) networks, and reinforcement learning algorithms.

Decentralized architectures provide a practical way forward. For example, in January 2025, researchers Yuqi Zhou and Hao Zhu from the National Renewable Energy Lab (NREL) and the University of Texas at Austin developed a decentralized ML design for Optimal Load Shedding. Tested on a synthetic Texas 2,000-bus system, the design allowed individual load centers to use local data to respond autonomously to emergencies, reducing the need for system-wide, real-time communication.

"The incorporation of advanced human–machine interfaces is essential, as it enables humans to validate the effectiveness of AI/ML solutions while remaining actively engaged, fostering trust in AI/ML deployment."

- Yousu Chen, Pacific Northwest National Laboratory

Physics-informed neural networks (PINNs) help ensure that ML outputs respect the physical constraints of power grids, avoiding recommendations that could lead to technical issues. Meanwhile, offline training using historical and simulated data allows ML models to respond almost instantly during real-time operations, bypassing the delays of live training. Human–machine interfaces add an extra layer of safety, enabling operators to review and validate ML-driven decisions before implementation.
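One way to picture the physics-informed idea is a training loss that adds a penalty for violating a physical law to the usual data-fit term. The sketch below uses a single power-balance constraint (total generation equals total load plus losses) as a stand-in. Real PINN formulations for power systems typically embed fuller power flow equations. The function name, penalty weight, and numbers are assumptions.

```python
def physics_informed_loss(predicted, target, load, losses=0.0, weight=10.0):
    """Data-fit MSE plus a weighted penalty on power-balance violation."""
    data_loss = sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)
    # Power balance: total generation should equal total load plus network losses
    imbalance = sum(predicted) - (sum(load) + losses)
    return data_loss + weight * imbalance ** 2

# Hypothetical two-generator, two-load system (MW)
target_dispatch = [50, 30]
loads = [40, 40]

balanced = [52, 28]    # further from the target, but generation matches load
unbalanced = [51, 31]  # closer to the target, but over-generates by 2 MW

print(physics_informed_loss(balanced, target_dispatch, loads))    # 4.0
print(physics_informed_loss(unbalanced, target_dispatch, loads))  # 41.0
```

The second candidate fits the training target more closely but is penalized heavily for breaking the balance, which is how this kind of loss steers a model away from physically infeasible outputs.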

Scalability and Cost Concerns

Scaling ML solutions for large grid systems brings its own set of challenges. The computational and financial demands of managing grids with tens of thousands of buses and resources can grow steeply with system size, creating significant limitations.

To address these issues, cost-effective strategies focus on improving computational efficiency. For instance, in July 2024, researchers from NREL and the University of Texas at Austin developed an ML algorithm for fairness-aware load shedding. Tested on the RTS-GMLC system, the algorithm achieved millisecond-level computation by pinpointing key constraints, balancing economic and fairness considerations in real time. Learning-augmented solvers have also shown dramatic improvements, reducing the primal-dual gap by up to 10,000 times within specific time limits compared to traditional methods.

| Strategy | Primary Benefit | Cost Impact |
|---|---|---|
| Decentralized Design | Reduces reliance on system-wide communication | Lowers communication hardware costs |
| Offline Training | Shifts computational burden from real-time operations | Reduces operational computing costs |
| Warm-Start Optimization | Speeds up convergence of existing solvers | Extends the life of current infrastructure |
| Local Measurements | Leverages existing sensor data at each bus | Avoids system-wide data synchronization |

Industry Deployment Case Studies

Practical implementations highlight how machine learning (ML) methods tackle previously discussed challenges, delivering measurable gains in efficiency, cost reduction, and system reliability.

GridSense Platform for Load Balancing

In January 2025, researchers Yuqi Zhou from the National Renewable Energy Lab (NREL) and Hao Zhu from the University of Texas at Austin introduced a decentralized neural network model for Optimal Load Shedding (OLS). This system was tested on the IEEE 118-bus system and a synthetic Texas 2,000-bus system, simulating large-scale grid operations across Texas.

The model allowed local load centers to independently calculate load-shedding solutions, cutting down on computation and communication demands during critical moments.
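The decentralized pattern can be sketched as follows: each load center holds its own small model, trained offline, and evaluates it on local measurements only. Everything in this example is hypothetical, including the input features, the weights, and the single-layer form. It is not the architecture from the study, only an illustration of decisions made without system-wide communication.

```python
def local_shed_fraction(features, weights, bias):
    """One-layer stand-in for a trained local model; output clipped to [0, 1]."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return min(1.0, max(0.0, score))

# Hypothetical per-center parameters; in practice these come from offline training
CENTERS = {
    "center_a": {"weights": (4.0, 2.0), "bias": -0.1},
    "center_b": {"weights": (3.0, 1.0), "bias": -0.2},
}

def respond(local_measurements):
    """Each center uses only its own measurements; no shared state is needed."""
    return {
        name: local_shed_fraction(local_measurements[name], **params)
        for name, params in CENTERS.items()
    }

# Assumed features: (frequency drop in Hz, voltage drop in per unit)
measurements = {"center_a": (0.1, 0.05), "center_b": (0.0, 0.0)}
decisions = respond(measurements)
print(decisions)
```

Because each call touches only one center's inputs, the response time does not depend on the number of centers or on the state of the communication network.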

"Our learning-for-OLS approach can greatly reduce the computation and communication needs during online emergency responses, thus preventing the cascading propagation of contingencies for enhanced power grid resilience."

- Yuqi Zhou and Hao Zhu, IEEE Transactions on Power Systems

This decentralized approach proved especially effective during emergencies, where rapid responses are crucial to prevent cascading blackouts. By enabling local decision-making instead of relying on a centralized system, the platform responded more quickly to grid disruptions while maintaining balanced load distribution. This case study showcases how ML algorithms can strengthen grid stability and resilience.

In addition to these findings, other research has shown how Support Vector Regression (SVR) enhances microgrid operations.

SVR in Microgrids with Solar PV and Wind

ML also plays a critical role in optimizing smaller-scale microgrids. A study published in Scientific Reports (2024–2025) demonstrated how Support Vector Regression (SVR) effectively manages microgrids powered by solar photovoltaic (PV) panels and wind turbines. The team used SVR algorithms to forecast energy production by analyzing historical data and real-time weather inputs.

The results were notable: operating costs dropped by 8.4%, renewable energy utilization increased by 12%, and peak load demand was reduced by 15%. This led to more efficient supply-demand matching throughout the day.

SVR forecasting achieved a Mean Squared Error (MSE) of 2.002 for solar and 3.059 for wind, with Root Mean Squared Error (RMSE) values of 1.415 for solar and 1.749 for wind, significantly outperforming traditional linear regression models.
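As a quick consistency check, RMSE is the square root of MSE, so the reported pairs can be verified directly. The variable names are illustrative.

```python
import math

# MSE values reported for the SVR forecasts
reported_mse = {"solar": 2.002, "wind": 3.059}

# RMSE is the square root of MSE
rmse = {source: round(math.sqrt(mse), 3) for source, mse in reported_mse.items()}
print(rmse)  # {'solar': 1.415, 'wind': 1.749}
```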

"The application of our SVR model resulted in an 8.4% reduction in overall operating costs, highlighting its effectiveness in improving energy management efficiency."

- Scientific Reports

These advancements provided direct financial benefits for grid operators while improving system reliability. More accurate forecasts allowed for better scheduling of backup resources and reduced the need for costly peak-time energy purchases. This case study further illustrates how ML algorithms enhance energy management and grid stability.

Conclusion

Machine learning is transforming grid management by boosting efficiency, improving reliability, and cutting costs. It addresses critical challenges like the unpredictability of renewable energy sources and the high computational demands of real-time system optimization.

The impact of these advancements is evident in measurable performance improvements. For instance, machine learning models now achieve 98.05% accuracy in forecasting electricity consumption. Neural networks outperform traditional methods, solving complex optimal power flow problems 14 to 22 times faster than conventional mathematical solvers. In a study involving a 1,300-bus system, machine learning models maintained a cost error of only 1%, compared to the 2% error typical of standard DC OPF models. This efficiency has translated to real-world benefits, with grid operators reporting a 1.4% reduction in operational costs and up to 28% lower CO₂ emissions in specific scenarios.

Beyond operational improvements, machine learning strengthens grid resilience through automated anomaly detection and decentralized emergency response. For example, a sudden 800 MW drop in solar power - enough to supply 500,000 homes - can occur due to passing cloud cover. Decentralized neural network designs allow individual load centers to make autonomous decisions during such emergencies, preventing cascading blackouts without overloading communication systems.

For grid operators navigating the shift to renewable energy, machine learning provides the computational power needed to manage large-scale intermittency. It ensures accurate demand forecasting, enables dynamic, real-time power distribution, and supports seamless integration of renewable sources. As power systems move toward decentralized and renewable-heavy configurations, machine learning will continue to play a critical role in maintaining the delicate balance of supply, demand, and overall system stability.

To explore more advanced solutions and insights into power generation equipment, visit Electrical Trader.

FAQs

When should I use SVR vs. LSTM for load forecasting?

When dealing with high-resolution load data and aiming to predict over longer timeframes, LSTM is often the stronger candidate. It is designed to identify long-term patterns and handle complex temporal dependencies, provided enough historical data is available. Results do vary by dataset, though: in the MAPE comparison cited earlier, SVR held up better than LSTM on the long-term horizon. SVR is a strong choice for short-term predictions, particularly when the data is simpler and training time is limited.

For the best outcomes, consider using hybrid models that combine the strengths of both approaches. This blend can improve accuracy and provide a more reliable solution for managing power grid loads.

How do RL models stay safe with real grid constraints?

Reinforcement Learning (RL) models stay within grid constraints by using safe RL strategies, which build predefined safety constraints into both training and operation. Techniques such as constrained optimization and barrier functions prevent the agent from taking unsafe actions. The models are also designed to respect grid limits such as voltage, current, and load thresholds, which keeps the grid stable and supports reliable management.
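Two of these ideas can be sketched in a few lines: a safety filter that clips a proposed action so the result stays inside the limits, and a log-barrier penalty that grows as the state approaches a limit. The per-unit voltage band of 0.95 to 1.05 and the assumption that the action changes voltage directly are simplifications chosen for illustration.

```python
import math

V_MIN, V_MAX = 0.95, 1.05  # assumed per-unit voltage limits

def safe_action(voltage, proposed_change):
    """Clip a proposed voltage adjustment so the result stays within limits."""
    lowest, highest = V_MIN - voltage, V_MAX - voltage
    return min(highest, max(lowest, proposed_change))

def barrier_penalty(voltage):
    """Log-barrier: grows without bound as voltage approaches either limit."""
    if not V_MIN < voltage < V_MAX:
        return float("inf")
    return -math.log(voltage - V_MIN) - math.log(V_MAX - voltage)

# An agent at 1.04 p.u. proposes +0.03; the filter allows only about +0.01
print(round(safe_action(1.04, 0.03), 4))
# The penalty is higher near the upper limit than at the nominal voltage
print(barrier_penalty(1.0) < barrier_penalty(1.04))
```

The filter acts at decision time, while the barrier term is typically subtracted from the reward during training so the agent learns to keep a margin from the limits.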

What data do utilities need to deploy ML in legacy grids?

Utilities rely on real-time data from smart meters and sensors to monitor energy consumption, voltage, current, and equipment status. For fault detection and system monitoring, high-quality waveform data - such as Continuous Point On Wave (CPOW) - is especially critical. Other important data includes fault records, equipment failure reports, and operational parameters.

To achieve accurate and reliable data, utilities often need to upgrade sensors and systems. These improvements support better modeling, predictive maintenance, and load balancing, even in older grid infrastructures.
