Study: Energy Savings with Voltage Regulation

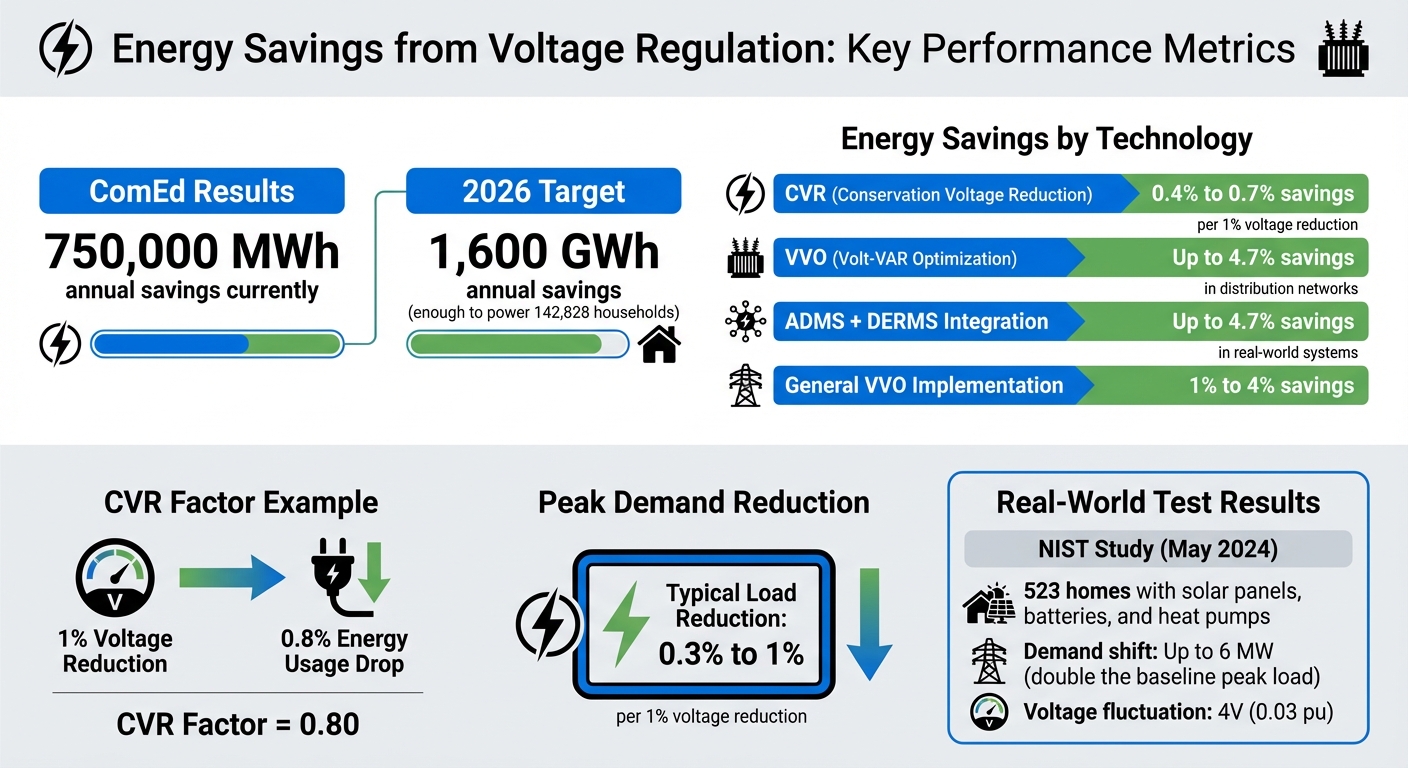

Voltage regulation technologies, like Conservation Voltage Reduction (CVR) and Volt-VAR Optimization (VVO), are helping utilities reduce energy consumption and improve grid efficiency. These methods lower voltage levels and manage reactive power flow to cut energy usage without compromising reliability. For every 1% voltage reduction, CVR can save 0.4% to 0.7% in energy use, while VVO systems deliver up to 4.7% savings in distribution networks.

Recent advancements, such as smart inverters, real-time monitoring tools, and machine learning, are making these systems more precise and effective. Utilities like ComEd have already achieved significant results, saving over 750,000 MWh annually through optimized voltage control. However, measuring these savings remains complex, requiring advanced modeling and testing to account for variables like weather and usage patterns.

This article explores how voltage regulation works, its measurable benefits, and the technologies driving its progress.

Energy Savings from Voltage Regulation: CVR and VVO Performance Metrics

Recent Study Results on Voltage Regulation

By March 2023, ComEd's voltage optimization program covered its vast 11,400-square-mile service area in northern Illinois, delivering over 750,000 MWh in annual energy savings. Spearheaded by Jason Pozen and Wen Fan, the program relies on a centralized software platform equipped with VVO algorithms. These algorithms manage transformer load tap changers and capacitor banks to maintain optimal voltage levels across the system. The results highlight both energy and demand savings.

Energy and Demand Savings Numbers

The program is on track to achieve annual savings of over 1,600 GWh by 2026 - enough energy to supply 142,828 households. These savings are based on actual reductions in energy usage throughout the distribution network, not just theoretical estimates.
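
As a rough sanity check on scale, the two figures quoted above imply an average annual consumption per household. The short sketch below only divides one quoted number by the other; the variable names are illustrative and the result is an implied average, not a figure reported by the program.

```python
# Figures quoted in the text above.
projected_savings_gwh = 1_600   # projected annual savings by 2026
households = 142_828            # households that energy could supply

# 1 GWh = 1,000,000 kWh
kwh_per_household = projected_savings_gwh * 1_000_000 / households

print(f"Implied use per household: {kwh_per_household:,.0f} kWh per year")
```

The implied value is a little over 11,000 kWh per household per year.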

What makes this program effective is its ability to control voltage levels at thousands of distribution points simultaneously. This coordinated effort ensures that reducing voltage directly lowers energy consumption without sacrificing service reliability or equipment performance.

How Voltage Reduction Affects Efficiency

Voltage reduction does not translate into energy savings at a fixed rate; the result depends on how connected loads respond. For instance, a May 2024 NIST study examined 523 homes equipped with solar panels, batteries, and price-responsive heat pumps. The study found that flexible loads could shift demand by up to 6 MW - double the baseline peak load - while causing voltage fluctuations of 4 V (0.03 pu) in response to real-time price signals.

Factors like temperature, seasonal patterns, and customer usage habits also play a role in determining how much energy voltage reduction can save. As the IEEE PES Technical Report explains:

"This issue is complicated by the difficulty of distinguishing between changes in load and energy consumption caused by variations in temperature and customer usage patterns over time and those energy changes caused by the activity of regulating devices"

To accurately assess performance, utilities must account for these variables, including temperature shifts, seasonal trends, and changes in customer behavior.

How Voltage Regulation Performance Is Measured

Measuring energy savings from voltage regulation isn't straightforward. According to the IEEE CVR M&V Standard Taskforce:

"One of the most significant challenges in CVR application is the measurement and verification (M&V) of its effects. Once CVR is applied, there is no benchmark load consumption measurement, which complicates assessing the CVR's real impact."

To address this, utilities estimate what energy usage would have been without voltage reduction, then compare it to actual consumption. This involves advanced techniques that consider variables like weather, user behavior, and seasonal trends. Accurate calculations and rigorous testing are essential for reliable results, as outlined below.

Energy Savings Calculation Methods

The CVR factor is the main metric for evaluating energy savings. It represents the ratio of the percentage change in power consumption to the percentage change in voltage. For instance, if a 1% voltage reduction leads to a 0.8% drop in energy usage, the CVR factor is 0.80.
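
The ratio described above can be written as a small helper. This is a minimal sketch: the function names and the feeder readings (124.0 V, 5,000 kW, and so on) are hypothetical values chosen for illustration, not data from any study cited here.

```python
def pct_change(before: float, after: float) -> float:
    """Percent change from `before` to `after` (negative means a reduction)."""
    return (after - before) / before * 100.0


def cvr_factor(pct_energy_change: float, pct_voltage_change: float) -> float:
    """Ratio of percent change in energy (or power) to percent change in voltage."""
    if pct_voltage_change == 0:
        raise ValueError("voltage change must be non-zero")
    return pct_energy_change / pct_voltage_change


# The example from the text: a 1% voltage reduction and a 0.8% energy drop.
factor = cvr_factor(pct_energy_change=-0.8, pct_voltage_change=-1.0)

# Hypothetical feeder readings before and after voltage reduction.
dv = pct_change(124.0, 121.52)      # voltage falls by 2%
dp = pct_change(5000.0, 4930.0)     # power falls by 1.4%
feeder_factor = cvr_factor(dp, dv)

print(f"Text example CVR factor: {factor:.2f}")
print(f"Hypothetical feeder CVR factor: {feeder_factor:.2f}")
```

Because both changes are reductions, the signs cancel and the factor comes out positive.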

Over time, calculation methods have shifted from basic linear regression models to more advanced machine learning approaches. For example:

- Studies on the Puerto Princesa Distribution System (2014–2018) and a UK supermarket (2012–2014) utilized multiple linear regression models. These models factored in variables like peak demand, customer count, humidity, and temperature, explaining up to 95% of demand variation.

- Modern techniques such as Gradient Boosting and Random Forest have achieved near-perfect predictive R² scores for voltage stability.

- Artificial Neural Networks (ANN) now estimate active power losses using substation data - like voltage, active power, and reactive power - while delivering lower RMS errors compared to traditional curve-fitting methods.

These advancements in measurement techniques allow utilities to fine-tune their voltage regulation programs, improving energy efficiency and savings.

Peak Demand Savings Measurement

Measuring peak demand savings requires precision similar to energy savings calculations. Utilities often use a day-on/day-off testing method during pilot phases, applying CVR every other day. This creates alternating "on" and "off" datasets for direct comparison. Such tests help establish a baseline for the CVR factor.
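
A day-on/day-off comparison can be sketched as follows. The pilot data is hypothetical, and the calculation is the simplest possible version: it compares raw averages and assumes the "on" and "off" days saw similar weather and usage, which a real analysis would need to verify or correct for.

```python
from statistics import mean

# Hypothetical pilot data: (CVR active?, mean voltage in V, energy in MWh).
days = [
    (False, 125.0, 200.0),
    (True, 122.5, 197.0),
    (False, 125.0, 202.0),
    (True, 122.5, 198.2),
    (False, 125.0, 198.0),
    (True, 122.5, 196.4),
]

on_days = [d for d in days if d[0]]
off_days = [d for d in days if not d[0]]

v_on, v_off = mean(d[1] for d in on_days), mean(d[1] for d in off_days)
e_on, e_off = mean(d[2] for d in on_days), mean(d[2] for d in off_days)

dv_pct = (v_on - v_off) / v_off * 100.0   # percent voltage change
de_pct = (e_on - e_off) / e_off * 100.0   # percent energy change
cvr_f = de_pct / dv_pct

print(f"Voltage change: {dv_pct:.2f}%, energy change: {de_pct:.2f}%")
print(f"Estimated CVR factor: {cvr_f:.2f}")
```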

However, substation-level measurements can complicate results. These readings include system losses and the effects of capacitor banks, which may distort the data. While load-level CVR factors reflect the actual load characteristics, substation measurements also capture reactive power compensation from devices like capacitor banks. This can result in negative CVR factors for reactive power, as capacitor bank injections decrease and line losses potentially rise.
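
The sign flip can be illustrated with a toy calculation. A fixed shunt capacitor's reactive output scales with the square of voltage; the load model here (reactive demand scaling linearly with voltage) and all kvar values are assumptions chosen only to show the effect, and line losses are ignored.

```python
def substation_q_kvar(
    v_pu: float,
    load_q_nominal_kvar: float,
    load_q_exponent: float,
    cap_nominal_kvar: float,
) -> float:
    """Net reactive power seen at the substation: load demand minus capacitor injection."""
    load_q = load_q_nominal_kvar * v_pu ** load_q_exponent  # assumed load model
    cap_q = cap_nominal_kvar * v_pu ** 2                    # capacitor output ~ V^2
    return load_q - cap_q


# Hypothetical feeder: 500 kvar of load, 450 kvar of fixed capacitors.
q_before = substation_q_kvar(1.00, 500.0, 1.0, 450.0)
q_after = substation_q_kvar(0.99, 500.0, 1.0, 450.0)   # 1% voltage reduction

dq_pct = (q_after - q_before) / q_before * 100.0
dv_pct = (0.99 - 1.00) / 1.00 * 100.0
cvr_q = dq_pct / dv_pct

print(f"Net Q before: {q_before:.2f} kvar, after: {q_after:.2f} kvar")
print(f"Reactive CVR factor at the substation: {cvr_q:.2f}")
```

In this example the load's reactive demand falls, but the capacitor injection falls by more, so net reactive power at the substation rises and the computed factor is negative.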

Utilities typically observe load reductions of 0.3% to 1% for every 1% voltage reduction during CVR tests. Achieving accurate results, however, hinges on the quality of the data collected.

Technologies That Enable Voltage Regulation

Modern power systems rely on advanced technologies for voltage regulation, combining established tools like load-tap changers, auto transformers, and switched shunt capacitors with cutting-edge power electronics such as thyristors, smart inverters, SVCs, and STATCOMs. To make the most of these technologies, accurate, real-time data is crucial.

This is where tools like AMI, PMUs, and distribution automation come into play. These systems provide the precise, real-time monitoring needed to strike the balance between reducing losses and maintaining voltage standards.

Volt-VAR Optimization (VVO)

Volt-VAR Optimization (VVO) focuses on managing both voltage levels and reactive power across the distribution network. The goal? To cut energy consumption, reduce peak demand, and minimize system losses. These efforts lead to measurable efficiency improvements, as earlier studies have demonstrated.

How does VVO achieve this? It coordinates two key functions:

- Voltage Magnitude Adjustments: Using tap changers and regulators.

- Reactive Power Supply: Using capacitor banks to improve power factor and lower line losses.
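
The reactive power side can be sketched with the standard power-factor correction relationship. The feeder values (2,000 kW, power factor 0.85 corrected to 0.98) are hypothetical, and the loss estimate assumes real power and voltage stay constant so that current scales inversely with power factor.

```python
import math


def capacitor_kvar_needed(p_kw: float, pf_now: float, pf_target: float) -> float:
    """Capacitor size from Q_c = P * (tan(phi_now) - tan(phi_target))."""
    return p_kw * (math.tan(math.acos(pf_now)) - math.tan(math.acos(pf_target)))


def relative_line_loss(pf_now: float, pf_target: float) -> float:
    """Ratio of I^2*R losses after correction to losses before.

    At constant real power and voltage, current is proportional to 1/pf,
    so losses are proportional to 1/pf^2.
    """
    return (pf_now / pf_target) ** 2


kvar = capacitor_kvar_needed(2000.0, 0.85, 0.98)
loss_ratio = relative_line_loss(0.85, 0.98)

print(f"Capacitor bank needed: {kvar:.0f} kvar")
print(f"Line losses after correction: {loss_ratio:.1%} of original")
```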

Pacific Northwest National Laboratory highlights the efficiency benefits of reducing system voltage:

"Efficiency gains are primarily a result of reducing the system voltage. These efficiency gains result in less energy consumed by the end-use equipment served by the distribution system, while maintaining the same level of service to the customer."

Utilities often see energy savings of 1% to 4% after implementing VVO systems. For example, a February 2021 study by the Electric Power Research Institute (EPRI) and Sacramento Municipal Utility District (SMUD) examined 14 substations and reported energy consumption reductions of 0.4% to 0.7% for each percent of voltage reduction.
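
Applied in the forward direction, a CVR factor turns a planned voltage reduction into an expected savings range. The sketch below uses the 0.4 to 0.7 range quoted above; the 3% voltage reduction and the 50,000 MWh annual feeder load are hypothetical inputs.

```python
def estimated_savings_mwh(
    annual_mwh: float, cvr_f: float, voltage_reduction_pct: float
) -> float:
    """Expected annual savings: energy * CVR factor * percent voltage reduction."""
    return annual_mwh * cvr_f * voltage_reduction_pct / 100.0


ANNUAL_MWH = 50_000.0        # hypothetical feeder consumption
VOLTAGE_REDUCTION_PCT = 3.0  # hypothetical operating target

low = estimated_savings_mwh(ANNUAL_MWH, 0.4, VOLTAGE_REDUCTION_PCT)
high = estimated_savings_mwh(ANNUAL_MWH, 0.7, VOLTAGE_REDUCTION_PCT)

print(f"Expected savings: {low:.0f} to {high:.0f} MWh per year")
```

With these inputs the range corresponds to 1.2% to 2.1% of annual consumption, which falls inside the 1% to 4% band mentioned above.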

Modern VVO systems now integrate advanced Distribution Management Systems (DMS), enabling real-time modeling and coordinated control. Additionally, smart inverters are becoming essential for asynchronous generators. As David Shadle, Grid Optimization Editor at T&D World, explains:

"Smart inverters which are increasingly being required for asynchronous generators allow automatic synchronization to the grid, helping the generator match voltage, frequency, amplitude, and phase angle."

While VVO optimizes voltage and reactive power across the entire network, Conservation Voltage Reduction (CVR) has a narrower goal: lowering delivered voltage to cut consumption.

Conservation Voltage Reduction (CVR)

CVR is designed to intentionally lower voltage within acceptable limits to reduce energy consumption and peak demand. It achieves this by controlling voltage regulators, load-tap changers, and capacitors to maintain end-user voltages within required standards. Modern CVR systems often incorporate PV smart inverters to ensure voltage regulation remains stable.
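
The core constraint, lowering voltage only as far as end-of-line limits allow, can be sketched as a tap search. This is a toy model under stated assumptions: a 32-step regulator with 0.625% per step, the ANSI C84.1 Range A service band of 114 to 126 V on a 120 V base, and a fixed feeder voltage drop that does not change with the tap position. The function name, the 1 V margin, and the example inputs are hypothetical.

```python
STEP_PCT = 0.625   # voltage change per tap step (32-step regulator, +/-10%)
V_MIN = 114.0      # lower service limit on a 120 V base
V_MAX = 126.0      # upper service limit on a 120 V base


def lowest_safe_tap(source_v: float, feeder_drop_v: float, margin_v: float = 1.0):
    """Return the lowest tap (-16..16) that keeps the end-of-line voltage
    at or above V_MIN + margin_v, or None if no tap satisfies the limits."""
    for tap in range(-16, 17):
        head_v = source_v * (1 + tap * STEP_PCT / 100.0)
        end_v = head_v - feeder_drop_v   # simplification: constant drop
        if end_v >= V_MIN + margin_v and head_v <= V_MAX:
            return tap
    return None


tap = lowest_safe_tap(source_v=122.0, feeder_drop_v=4.0)
print(f"Lowest safe tap position: {tap}")
```

A real controller would use measured end-of-line voltages (for example from AMI) rather than an assumed drop, and would re-evaluate as load changes.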

Research by the National Renewable Energy Laboratory (NREL) has shown that combining Advanced Distribution Management Systems (ADMS) with Distributed Energy Resource Management Systems (DERMS) can lead to energy savings of up to 4.7% in real-world distribution systems.

One of the challenges with CVR is measuring its impact. Since activating CVR eliminates a direct baseline for comparison, utilities must use statistical or modeling methods to estimate what energy consumption would have been without the voltage reduction. To address this, the IEEE introduced the 3102-2024 standard in January 2025, providing a framework for utilities to calculate energy savings and CVR factors.

Program Performance and Market Results

Understanding why there's often a gap between projected and actual savings is key to making voltage regulation programs more effective. By refining measurement techniques and analyzing program performance, we can uncover discrepancies and work toward more reliable energy efficiency outcomes.

Projected vs. Measured Savings

The gap between projected and measured savings remains a challenge for voltage regulation programs. One of the biggest hurdles is separating the effects of voltage regulation from external influences like temperature changes or shifting customer energy usage patterns. To tackle this, many utilities turn to regression-based or comparison-based methods. A popular approach is the "day-on/day-off" method, where Conservation Voltage Reduction (CVR) is applied every other day to gather data that helps fine-tune predictive models.

| Method | Description | Application |
|---|---|---|
| Regression-based | Uses mathematical models to link energy use with factors like temperature and voltage. | Filters out external influences on savings. |
| Comparison-based | Compares energy use during "on" versus "off" periods. | Often paired with the "day-on/day-off" method for data. |
| Day-on/Day-off | Alternates voltage regulation daily to establish a baseline. | Commonly used for empirical CVR factor analysis. |

These methods form the backbone of efforts to improve program accuracy. Addressing these measurement challenges is the first step toward refining performance and achieving better results.

How to Improve Program Results

Improving program outcomes starts with standardization and better monitoring tools. The IEEE CVR M&V Standard Taskforce, launched in October 2020, developed guidelines to ensure consistent reporting of energy efficiency benefits, which directly supports better market performance.

Advanced technologies like smart meters, automation systems, and Phasor Measurement Units (PMUs) are making it easier to isolate CVR savings from baseline load variations. Additionally, the industry is moving away from traditional centralized control systems. Instead, there's a growing shift toward distributed, feedback-based optimization that adjusts to real-time grid conditions. This evolution promises to make voltage regulation programs more adaptive and accurate.

The Future of Voltage Regulation in Energy Management

The traditional methods of voltage control are no longer sufficient for today’s energy grid, which is increasingly shaped by distributed energy resources. The rise of rooftop solar panels, battery storage systems, and electric vehicle chargers is pushing utilities to rethink how they manage voltage across their distribution networks.

"Environmental and sustainability concerns have caused a recent surge in the penetration of distributed energy resources into the power grid. This may lead to voltage violations in the distribution systems making voltage regulation more relevant than ever." - Priyank Srivastava, et al.

To meet these challenges, utilities are shifting from centralized, offline control to distributed, real-time optimization. This new approach allows for instantaneous responses to changing grid conditions. Modern technologies like Model Predictive Control, machine learning, and smart inverters are driving this transformation. For example, smart inverters from solar systems can now provide dynamic voltage support - even at night - by using reactive power to stabilize the grid. Additionally, grid-edge optimization devices and STATCOMs are offering precise, localized voltage control that older technologies couldn’t achieve.
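
Dynamic voltage support from a smart inverter is often described as a piecewise-linear Volt-VAR curve: inject reactive power when voltage is low, absorb it when voltage is high, and do nothing inside a deadband. The sketch below is a minimal illustration; the breakpoints and the 0.44 pu reactive limit are assumed example settings, not values taken from this article, and actual settings are determined by the utility and applicable interconnection requirements.

```python
def volt_var_q_pu(
    v_pu: float,
    v1: float = 0.92,
    v2: float = 0.98,
    v3: float = 1.02,
    v4: float = 1.08,
    q_max: float = 0.44,
) -> float:
    """Reactive power setpoint in per unit of inverter rating.

    Positive values inject reactive power (raising voltage);
    negative values absorb it (lowering voltage).
    """
    if v_pu <= v1:
        return q_max                                   # full injection
    if v_pu < v2:
        return q_max * (v2 - v_pu) / (v2 - v1)         # ramp toward deadband
    if v_pu <= v3:
        return 0.0                                     # deadband
    if v_pu < v4:
        return -q_max * (v_pu - v3) / (v4 - v3)        # ramp into absorption
    return -q_max                                      # full absorption


for v in (0.90, 0.95, 1.00, 1.05, 1.10):
    print(f"V = {v:.2f} pu -> Q = {volt_var_q_pu(v):+.2f} pu")
```

Because the curve depends only on locally measured voltage, each inverter can respond without waiting for a central command, which is one reason it suits distributed control.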

The integration of Advanced Distribution Management Systems (ADMS) and Distributed Energy Resource Management Systems (DERMS) has already demonstrated promising results. Real-world utility systems using these platforms have reported energy savings of up to 4.7%. By combining legacy equipment with advanced grid-edge devices, these systems ensure voltage stays within acceptable limits while maximizing efficiency.

However, as voltage regulation becomes more digitized, new challenges are emerging. Cybersecurity is now a critical concern and needs to be built directly into control frameworks. Leveraging digital twins and hardware-in-the-loop simulations will be essential for testing advanced Volt-VAR controls before they are deployed. Additionally, closer coordination between Transmission System Operators (TSOs) and Distribution System Operators (DSOs) is becoming necessary to maintain voltage stability across interconnected systems.

For electrical contractors and facility managers, adopting these advanced systems is essential for improving energy efficiency. Platforms like Electrical Trader provide access to both new and used transformers, breakers, and other power distribution components, helping to support these vital voltage regulation upgrades.

FAQs

How do CVR and VVO technologies help save energy in power distribution systems?

Conservation Voltage Reduction (CVR) and Volt-VAR Optimization (VVO) are powerful tools for improving energy efficiency in power distribution systems. These technologies work by fine-tuning voltage levels and enhancing the system's overall performance.

CVR focuses on lowering the voltage delivered to consumers, which leads to reduced energy consumption without compromising the quality of service. This is made possible by advanced systems that carefully monitor and manage voltage levels throughout the network.

VVO, by contrast, takes a dynamic approach, managing both voltage and reactive power in real time. Using equipment like capacitor banks, voltage regulators, and load tap changers, VVO minimizes power losses and boosts system efficiency.

When combined, CVR and VVO provide utilities with effective tools to decrease energy usage, lower peak demand, and improve the grid's overall efficiency. These technologies play a key role in creating more sustainable and efficient energy systems.

What makes it challenging to measure energy savings from voltage regulation technologies?

Measuring energy savings from voltage regulation technologies, like Conservation Voltage Reduction (CVR), isn’t straightforward. One major hurdle is the absence of a clear baseline for energy consumption once CVR is implemented, which makes pinpointing its exact impact tricky. On top of that, external factors - like fluctuating weather conditions or changes in how consumers use energy - can muddy the waters even further.

To address these challenges, advanced tools like simulations and regression models are often used. However, these methods come with their own set of limitations. Even with modern sensing and communication technologies that enhance monitoring, ensuring consistent and reliable data can still be a complex task. All of these variables make it tough to accurately measure the energy savings directly tied to voltage regulation.

How do smart inverters and machine learning improve voltage regulation in power systems?

Smart inverters and machine learning are reshaping how voltage regulation works in power distribution systems. Smart inverters play a key role by managing both reactive and active power dynamically. This allows them to make quicker and more precise voltage adjustments compared to older technologies like tap-changing transformers. Whether operating independently or through remote commands, these inverters adapt to real-time grid conditions, ensuring voltage stability and boosting energy efficiency.

Machine learning builds on this by analyzing large datasets to predict voltage changes. By proactively adjusting inverter settings, these algorithms create adaptive control strategies that respond to fluctuating loads and generation patterns. This minimizes voltage issues and strengthens grid reliability. Together, smart inverters and machine learning pave the way for a more efficient and resilient power distribution network, which becomes increasingly important as renewable energy sources like solar and battery storage become more common.