AI in Smart Grids: Key Industry Trends

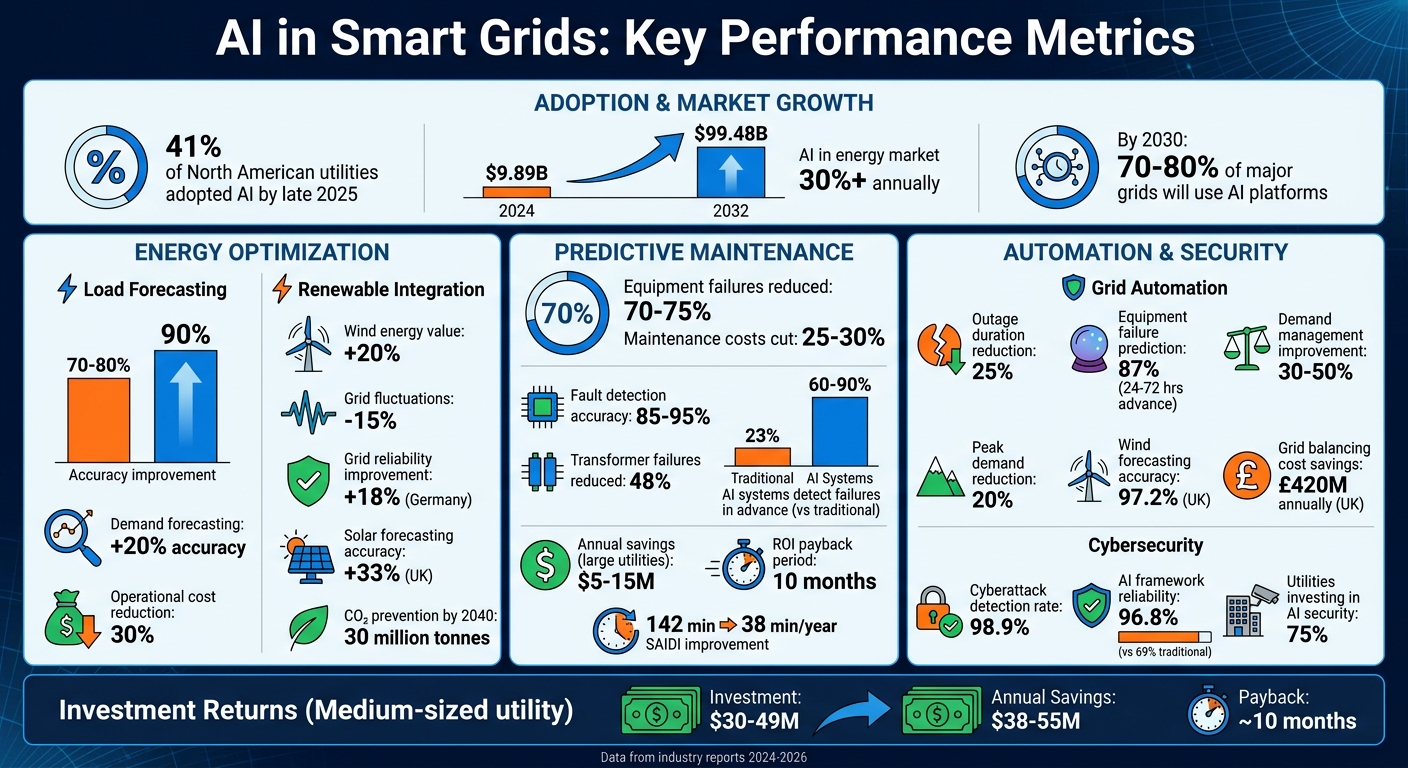

AI is transforming the U.S. power grid by addressing challenges like aging infrastructure, rising energy demands, and renewable energy integration. By late 2025, 41% of North American utilities were expected to have fully adopted AI, data analytics, and grid-edge intelligence. Key benefits include:

- Energy Optimization: AI improves demand forecasting accuracy by up to 20% and boosts renewable energy integration.

- Predictive Maintenance: AI reduces equipment failures by 70–75% and cuts maintenance costs by 25–30%.

- Grid Automation: Self-healing grids powered by AI restore power in seconds, reducing outages and improving reliability.

- Cybersecurity: AI detects 98.9% of cyberattacks, safeguarding critical infrastructure.

AI-driven solutions improve efficiency, reduce costs, and support renewable energy goals, making grids smarter and more reliable.

AI Impact on Smart Grid Performance: Key Statistics and Benefits

AI for Energy Optimization

Modern energy grids face a tough balancing act: they must generate enough power to meet real-time demand while accommodating the unpredictable nature of renewable energy. Traditional forecasting methods often fall short, with errors ranging from 20% to 30% of total demand. This is where AI steps in, leveraging massive amounts of data from smart meters, IoT sensors, and weather feeds to predict energy needs with far greater precision.

Machine Learning for Load Forecasting

Machine learning has pushed load-forecasting accuracy from 70–80% to roughly 90%. Techniques like long short-term memory (LSTM) networks excel at capturing the complex, nonlinear relationships between weather conditions and energy demand.
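To make the accuracy figures above concrete, here is a minimal sketch of one common way to score a load forecast. It assumes "accuracy" means 100% minus the mean absolute percentage error (MAPE); the article does not say which metric its sources used, and the demand numbers are hypothetical.

```python
def mape(actual_mw, forecast_mw):
    """Mean absolute percentage error, in percent, over paired readings."""
    if len(actual_mw) != len(forecast_mw) or not actual_mw:
        raise ValueError("series must be non-empty and the same length")
    total = 0.0
    for actual, forecast in zip(actual_mw, forecast_mw):
        total += abs(actual - forecast) / abs(actual)
    return 100.0 * total / len(actual_mw)


def accuracy_pct(actual_mw, forecast_mw):
    """Accuracy defined here (as an assumption) as 100% minus MAPE."""
    return 100.0 - mape(actual_mw, forecast_mw)


# Hypothetical hourly demand in MW, with two illustrative forecasts.
actual = [1000.0, 1200.0, 1500.0, 1100.0]
baseline = [800.0, 1500.0, 1125.0, 1375.0]   # misses by 20-25% each hour
ml = [900.0, 1320.0, 1350.0, 1210.0]         # misses by 10% each hour

print(f"baseline accuracy: {accuracy_pct(actual, baseline):.2f}%")
print(f"ML accuracy:       {accuracy_pct(actual, ml):.2f}%")
```

With these made-up numbers the baseline lands in the 70–80% band and the second forecast at about 90%, mirroring the ranges quoted above.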

A well-known example is Google DeepMind's wind forecasting work. In results published in February 2019, it applied deep learning models to 700 MW of wind capacity in the central United States. By forecasting wind output 36 hours in advance, the system boosted the economic value of that wind energy by roughly 20% and reportedly reduced grid fluctuations by 15%. Over in the UK, the National Grid Electricity System Operator teamed up with the Alan Turing Institute to refine solar power forecasting. Their machine learning system used 80 variables - such as cloud cover and atmospheric pressure - and improved accuracy by 33%.

Utilities that incorporate local weather data and continuously retrain their models see even better results. These dynamic approaches can improve accuracy by up to 20% compared to static algorithms. The payoff? Grid operators can lower reserve margins and cut operational costs by as much as 30% through smarter resource allocation. This precision makes it easier to integrate renewables while keeping the grid stable.
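The difference between a static model and a continuously retrained one can be sketched with a toy example. The code below swaps in a plain least-squares line for the machine learning models the article describes, and the temperature and demand data are hypothetical: the demand response to temperature shifts partway through the series, which a model trained once cannot see.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict(model, x):
    slope, intercept = model
    return slope * x + intercept


# Hypothetical data: demand (MW) vs. temperature (deg C). The relationship
# changes at index 8, for example after new cooling load is connected.
temps = [20, 22, 24, 26, 28, 30, 28, 26, 24, 22, 20, 22, 24, 26, 28, 30]
demand = [500 + 10 * t for t in temps[:8]] + [400 + 20 * t for t in temps[8:]]

WINDOW = 6
# Static model: trained once on the first window and never updated.
static_model = fit_line(temps[:WINDOW], demand[:WINDOW])
# Retrained model: refit on the most recent window before the final hour.
rolling_model = fit_line(temps[-WINDOW - 1:-1], demand[-WINDOW - 1:-1])

actual_mw = demand[-1]
static_forecast = predict(static_model, temps[-1])
rolling_forecast = predict(rolling_model, temps[-1])
print(f"actual {actual_mw} MW, static {static_forecast:.0f} MW, "
      f"retrained {rolling_forecast:.0f} MW")
```

In this contrived case the static model under-forecasts the final hour by 200 MW while the retrained model tracks it, which is the kind of gap that lets operators carry smaller reserve margins.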

Optimizing Solar and Wind Energy Integration

Improved forecasting is just the start. AI also plays a crucial role in integrating renewable energy sources like solar and wind, which are inherently variable. By analyzing diverse data - such as satellite images, weather radar, market prices, and smart meter readings - AI predicts renewable output and optimizes energy storage systems to smooth out fluctuations.

AI-managed storage systems turn those forecasts into dispatch decisions. In Germany, these systems improved grid reliability by 18% while handling over 243 TWh of renewable energy annually. Looking ahead, the European Union estimates that AI-optimized battery storage could reduce renewable energy curtailment by 45 TWh by 2040, preventing around 30 million tonnes of CO₂ emissions. A standout example is the Tesla Hornsdale Power Reserve in South Australia. This 129 MWh facility uses an AI-driven "Autobidder" system to autonomously adjust battery output, maintaining grid frequency and stabilizing the regional grid in real time.
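The basic idea of using storage to smooth renewable output can be sketched with a simple rule: charge on surplus, discharge on shortfall. This is not how Autobidder or any production system works; it is a hypothetical rule-based illustration that ignores round-trip losses and market prices, and every parameter name is an assumption.

```python
def smooth_with_battery(renewable_mw, target_mw, capacity_mwh,
                        max_power_mw, soc_mwh=0.0, step_h=1.0):
    """Return (delivered MW per step, final state of charge in MWh)."""
    delivered = []
    for gen in renewable_mw:
        surplus = gen - target_mw
        if surplus >= 0:
            # Charge, limited by power rating and remaining headroom.
            charge = min(surplus, max_power_mw,
                         (capacity_mwh - soc_mwh) / step_h)
            soc_mwh += charge * step_h
            delivered.append(gen - charge)
        else:
            # Discharge, limited by power rating and stored energy.
            discharge = min(-surplus, max_power_mw, soc_mwh / step_h)
            soc_mwh -= discharge * step_h
            delivered.append(gen + discharge)
    return delivered, soc_mwh


# Hypothetical hourly wind output (MW) against a 100 MW delivery target.
output, final_soc = smooth_with_battery(
    renewable_mw=[120.0, 150.0, 60.0, 80.0],
    target_mw=100.0, capacity_mwh=100.0, max_power_mw=40.0)
print(output, final_soc)
```

The raw output swings between 60 and 150 MW, while the delivered profile stays within 100 to 110 MW; the one overshoot occurs because the surplus exceeds the battery's assumed 40 MW power rating.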

"Data, digitalisation and risk management are key enablers to bring value and accelerate the decarbonisation of our power grids; in that context, a partnership with Google was obvious."

- Alexandre Cosquer, Executive Committee Member, ENGIE

AI's influence extends beyond storage. It's also driving innovations like Vehicle-to-Grid (V2G) systems. In Utrecht, Netherlands, AI-managed V2G systems connected electric buses and cars to the local grid, cutting energy consumption by 10% during peak hours. This transforms electric vehicles into active participants, feeding energy back into the grid when demand spikes.

Predictive Maintenance and Asset Management

AI is not just reshaping energy optimization; it's also transforming how we handle grid maintenance and asset management. Grid equipment often shows signs of potential failure weeks before a breakdown, but traditional methods may miss these early warnings. AI steps in by analyzing real-time data from IoT sensors, Phasor Measurement Units (PMUs), and Advanced Metering Infrastructure to catch problems before they escalate into costly outages.

This shift from reactive to predictive maintenance marks a major change in utility operations. Instead of waiting for equipment to fail or sticking to rigid maintenance schedules, AI identifies failure patterns and triggers maintenance only when necessary. This approach turns expensive emergency repairs - often 3–5 times more costly than planned work - into scheduled maintenance tasks.

Fault Detection and Anomaly Prediction

AI's ability to detect issues surpasses traditional systems and human operators. Machine learning models analyze signals like thermal creep, dissolved gas analysis (DGA) trends, and partial discharge - indicators that often go unnoticed until a major failure occurs. Tools like Graph Neural Networks (GNNs) verify grid topology in real time, while Convolutional Neural Networks (CNNs) analyze drone and satellite imagery to spot physical issues such as leaning poles or vegetation encroachment.
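As a rough illustration of flagging a drifting sensor trend, the sketch below applies a z-score test against a baseline period. This is a simple statistical stand-in rather than the machine learning models described above, and the hydrogen readings, baseline length, and threshold are all hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(readings, baseline_len=5, z_threshold=3.0):
    """Return indices of readings that deviate strongly from the baseline."""
    baseline = readings[:baseline_len]
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute z-scores")
    flagged = []
    for i in range(baseline_len, len(readings)):
        z = (readings[i] - mu) / sigma
        if abs(z) > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical dissolved hydrogen readings (ppm) from one transformer.
hydrogen_ppm = [50, 52, 49, 51, 50, 51, 53, 60, 75]
alerts = flag_anomalies(hydrogen_ppm)
print(alerts)
```

Only the last two readings are flagged here. A production model would also weigh rate of change, other gases, and load history before raising an alert.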

The accuracy of AI-based fault detection models ranges from 85% to 95%, with false alarms cut in half. While traditional grids detect only 23% of failures before they happen, AI-managed systems can identify 60–90% of issues days or even weeks in advance. For example, a U.S. utility implemented AI predictive maintenance across 10,000 transformers and 22,000 circuit breakers, reducing transformer failures by 48% and generating over $40 million in annual economic benefits within 15 months.

| Maintenance Type | Trigger Mechanism | Operational Impact |
|---|---|---|
| Reactive | Fixes after failure | High emergency costs; customer outages |
| Time-Based | Fixed calendar intervals | Replaces healthy assets; misses failures |
| Condition-Based | Sensor threshold breach | Better than time-based, still reactive |
| AI Predictive | Probability-based signs | Detects failures weeks ahead; planned fixes |

Digital twins amplify predictive maintenance by simulating grid assets under different conditions. For instance, the California ISO (CAISO) implemented an AI platform in partnership with Google Cloud and DeepMind, reaching full operational status in January 2024. Covering 80% of California's grid and serving 30 million people, it uses outage-risk scoring and contingency analysis to handle extreme heat events and reduce outages.

"Before predictive analytics, our transformer inspection program was calendar-driven... After deploying AI-based monitoring, we caught our first at-risk transformer four weeks before the model predicted failure... That one intervention paid for the system."

- Grid Asset Manager, Regional Distribution Utility

These capabilities not only prevent failures but also deliver considerable cost savings.

Cost Savings and Efficiency Gains

The financial benefits of AI-driven predictive maintenance are hard to ignore. Utilities adopting these systems reduce total maintenance costs by 25–30% and cut equipment breakdowns by 70–75%. These savings come from fewer emergency repairs, better crew scheduling, longer asset lifespans, and more effective capital planning.

AI also extends the operational life of assets. For example, transformers can safely run for 30 years instead of the typical 20, and avoiding a single transformer failure can save anywhere from $1 million to $7 million. Since insulation degradation causes 70% of high-voltage grid failures - a condition AI can detect weeks in advance - utilities can sidestep major losses.

By deploying technicians based on asset risk scores instead of fixed schedules, utilities improve efficiency. This targeted approach increases crew productivity by 25%, ensuring resources are allocated where they're needed most. Reported savings vary widely: some large utilities cite $5–$15 million annually, while estimates for medium-sized utilities show a return on investment within about 10 months and yearly savings of up to $55 million.
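Risk-based dispatch can be sketched as a ranking problem. The example below assumes a risk score equal to failure probability times the cost of an outage, which is one common convention rather than anything the article specifies; the asset IDs, probabilities, and costs are hypothetical.

```python
def prioritize(assets, crews_available):
    """Return the IDs of the highest-risk assets, one per available crew."""
    ranked = sorted(
        assets,
        key=lambda a: a["failure_prob"] * a["outage_cost_usd"],
        reverse=True,
    )
    return [a["id"] for a in ranked[:crews_available]]


# Hypothetical fleet: model-estimated failure probability and outage cost.
fleet = [
    {"id": "T1", "failure_prob": 0.02, "outage_cost_usd": 5_000_000},
    {"id": "T2", "failure_prob": 0.30, "outage_cost_usd": 1_000_000},
    {"id": "B7", "failure_prob": 0.10, "outage_cost_usd": 200_000},
    {"id": "T9", "failure_prob": 0.05, "outage_cost_usd": 7_000_000},
]
todays_jobs = prioritize(fleet, crews_available=2)
print(todays_jobs)
```

Note that T9 outranks T2 despite a much lower failure probability, because its outage would be far more expensive. A calendar-based schedule has no way to express that trade-off.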

Reliability also takes a big leap forward. AI-managed grids can slash the System Average Interruption Duration Index (SAIDI) from 142 minutes per year to just 38 minutes, drastically reducing customer outage durations. Between 2021 and 2023, Singapore's Energy Market Authority used IBM Watson to create a fully AI-managed grid, achieving world-class reliability by automating fault isolation and managing underwater cables.
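For readers unfamiliar with SAIDI, it is the total customer-minutes of interruption in a period divided by the total number of customers served. A minimal sketch of that calculation follows; the outage records and customer count are hypothetical.

```python
def saidi_minutes(interruptions, customers_served):
    """SAIDI: total customer-minutes interrupted / total customers served.

    interruptions: iterable of (duration_minutes, customers_affected).
    """
    if customers_served <= 0:
        raise ValueError("customers_served must be positive")
    customer_minutes = sum(duration * affected
                           for duration, affected in interruptions)
    return customer_minutes / customers_served


# Hypothetical year of sustained outages for a 100,000-customer utility.
outages = [(90, 20_000), (240, 5_000), (30, 40_000)]
annual_saidi = saidi_minutes(outages, customers_served=100_000)
print(f"SAIDI: {annual_saidi:.1f} minutes per customer")
```

Because the index weights each outage by the number of customers affected, shortening a widespread 30-minute outage can matter as much as preventing a long but localized one.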

Grid Automation and Real-Time Decision Making

AI is revolutionizing grid management by enabling fully automated systems that can detect, isolate, and restore service - often before disruptions are even noticed by customers. This shift from predictive maintenance to dynamic, proactive grid control marks a major operational leap for the utility sector.

Modern systems handle billions of data points every hour, sourced from PMUs, smart meters, and IoT sensors. These massive data streams feed machine learning models that make split-second decisions on critical tasks like power routing, voltage regulation, and load balancing. Building on earlier predictive maintenance advancements, this level of automation significantly improves grid resilience.

Self-Healing Grids

AI-powered self-healing grids detect anomalies, pinpoint faults, isolate affected areas, and restore power using automated switchgear. Known as Fault Location, Isolation, and Service Restoration (FLISR), this process has cut power restoration times from minutes - or even hours - to mere seconds.
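The isolate-and-restore logic can be sketched for the simplest case: a radial feeder split into numbered sections, fed from a substation at section 0, with a tie switch to a neighboring feeder at the far end. This is a hypothetical toy model; real FLISR schemes also verify that the alternate feeder has spare capacity and that protection settings remain valid.

```python
def flisr(num_sections, faulted_section, tie_available=True):
    """Plan switching for a fault on a radial feeder with a far-end tie."""
    if not 0 <= faulted_section < num_sections:
        raise ValueError("faulted_section is outside the feeder")
    # Opening the switches on both sides of the fault isolates it.
    upstream = list(range(0, faulted_section))
    downstream = list(range(faulted_section + 1, num_sections))
    restored = downstream if tie_available else []
    still_out = [faulted_section] + ([] if tie_available else downstream)
    return {
        "isolated": [faulted_section],
        "fed_from_substation": upstream,
        "restored_via_tie": restored,
        "still_out": still_out,
    }


plan = flisr(num_sections=5, faulted_section=2)
print(plan)
```

With the tie switch available, only the faulted section stays dark. Without it, every section downstream of the fault waits for a repair crew, which is the gap automated switching is meant to close.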

For example, Duke Energy showcased these benefits in the Carolinas in 2025. After deploying AI within its FLISR systems, it reduced outage durations by 25%, improving SAIDI scores from 100 to 75 minutes per customer annually. Similarly, California ISO demonstrated the potential of advanced automation. In August 2025, its AI platform - developed with Google Cloud and DeepMind - identified the risk of a cascading failure 47 hours in advance. By rerouting power and tapping into battery reserves, the system averted an estimated $340 million in economic losses affecting 2.4 million households. In reported deployments, AI has predicted 87% of equipment failures 24 to 72 hours in advance, giving utilities the lead time needed to address issues proactively.

Real-Time Monitoring and Control

AI is also transforming how grids handle peak demand and integrate renewable energy. During high-demand periods, AI-driven load balancing ensures energy is distributed efficiently, improving demand management performance by 30–50%. Demand response programs, powered by AI, adjust smart devices like thermostats and EV chargers, reducing peak electricity demand by as much as 20%.
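A demand response program's effect on the peak can be sketched as capping load at a fraction of the original peak and deferring the rest. The sketch below is a hypothetical simplification: it assumes hourly readings (so a 1 MW cut for one step equals 1 MWh) and does not model when the deferred energy is consumed later.

```python
def shave_peak(load_mw, flexible_fraction=0.2):
    """Cap load at (1 - flexible_fraction) of the peak.

    Returns (shaved profile in MW, deferred energy in MWh for hourly steps).
    """
    if not 0 <= flexible_fraction < 1:
        raise ValueError("flexible_fraction must be in [0, 1)")
    cap = max(load_mw) * (1 - flexible_fraction)
    shaved = [min(load, cap) for load in load_mw]
    deferred = sum(load - kept for load, kept in zip(load_mw, shaved))
    return shaved, deferred


# Hypothetical evening ramp in MW, with up to 20% of the peak flexible.
shaved_profile, deferred_mwh = shave_peak(
    [600.0, 800.0, 1000.0, 900.0, 700.0], flexible_fraction=0.2)
print(shaved_profile, deferred_mwh)
```

Here the 1,000 MW peak drops to 800 MW by deferring about 300 MWh of thermostat and EV charging load to other hours.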

One standout example is the UK National Grid ESO, which implemented an AI platform on Microsoft Azure between 2023 and 2025 to tackle wind energy volatility. The system improved wind forecasting accuracy to 97.2%, cutting grid balancing costs by £420 million annually. By processing weather conditions, grid data, and market signals all at once, the platform optimizes when and where wind energy is fed into the grid.

Graph Neural Networks are another breakthrough, enabling the creation of dynamic digital twins of grid infrastructure. These models validate physical relationships and correct data inconsistencies in real time. AI operates on three levels: as an investigator uncovering insights, as an advisor assisting human operators, and as an autonomous operator managing voltage directly. This capability allows the grid to react to changes in milliseconds, preventing cascading failures that could lead to widespread outages.

"We are witnessing the death of the 'reactive grid' and the birth of the Autonomous Grid." - Energy Solutions

These advancements in real-time monitoring and control pave the way for future developments in decentralized energy systems and cybersecurity.

Decentralized Energy and Cybersecurity

Smart grids are evolving toward decentralized systems where AI plays a key role in managing distributed energy resources and addressing cyber threats. The global AI in energy market is growing quickly, reaching about $9.89 billion in 2024, with projections suggesting it could reach $99.48 billion by 2032, which implies a compound annual growth rate of over 30%. This growth highlights the need for utilities to tackle the complexities of modern grids. These advancements build on earlier progress in energy optimization and grid automation, ensuring decentralized systems are both efficient and secure.
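The growth rate implied by those two market figures can be checked with the standard compound annual growth rate formula. The sketch assumes the 2024 and 2032 values are endpoints of an eight-year span.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of USD, as quoted above.
growth = cagr(start_value=9.89, end_value=99.48, years=2032 - 2024)
print(f"Implied CAGR: {growth:.1%}")
```

The result is a little over 33% per year, consistent with the "over 30%" figure.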

Edge AI for Decentralized Grid Management

Edge AI processes data directly at substations and generation points, cutting out delays common in cloud-based systems. This speed is critical for tasks like Fault Detection, Isolation, and Restoration (FDIR), a close relative of the FLISR process described earlier, which require split-second decisions to prevent localized issues from spiraling into larger problems. By deploying neural networks on low-cost single-board computers or existing smart grid hardware, utilities can efficiently manage Distributed Energy Resources (DERs), Battery Energy Storage Systems (BESS), and EV charging stations at the source of energy production or consumption.

A great example is Sandia National Laboratories' collaboration with Public Service Company of New Mexico (PNM) at the Prosperity solar farm. Here, a neural-network AI running on single-board computers identified both physical and cyber issues. These hardware-based systems process algorithms 100–1,000 times faster than software-only alternatives. Shamina Hossain-McKenzie, a cybersecurity expert at Sandia, explained the benefits:

"Our technology will allow the operators to detect any issues faster so that they can mitigate them faster with AI."

Another key innovation is autonomous islanding, where grid segments can operate independently during communication failures. Industrial IoT edge gateways facilitate this by functioning under extreme conditions - handling temperatures from –40°F to 158°F - and using encrypted protocols like IPsec and WireGuard to secure operational technology. As Hubery Zhang, IoT Technical Support Director at Robustel, described:

"Edge Computing for Smart Grids is the bridge between today's legacy constraints and tomorrow's autonomous reality."

These edge solutions not only improve grid management but also strengthen cybersecurity measures.

AI for Cybersecurity

AI is also transforming grid defenses. The cybersecurity landscape for smart grids is becoming increasingly complex, with over one-third of utility companies reporting major cybersecurity incidents in the past year. In Europe, cyberattacks on the energy sector doubled over a recent two-year period. Every IoT device, inverter, and EV charger can potentially serve as an entry point for cyber-physical attacks.

AI is stepping up to meet these challenges. AI-enhanced smart grid systems have achieved a 98.9% detection rate for cyberattacks, while AI-based frameworks boast a reliability ratio of 96.8% - far surpassing the 69% achieved by traditional Deep Belief Networks. Nearly 75% of utilities are now investing in AI-driven cybersecurity solutions. Many of these systems use autoencoder neural networks that rely on unsupervised learning to understand normal grid behavior, flagging anomalies as potential threats without needing pre-labeled attack data.

One major hurdle is integrating cyber and physical data. High-frequency physical data, like voltage and current readings reported 60 times per second, must be combined with sporadic cyber network traffic. AI excels at spotting mismatches, such as when a substation reports "zero current" while surrounding nodes show active power flow. Graph Neural Networks further enhance security by creating dynamic models of grid topology, verifying physical relationships, and correcting data inconsistencies in real time to counter false data injection attacks.
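The "zero current" mismatch described above can be expressed as a simple cross-check between what a node reports and what the lines connected to it measure. The sketch below is a hand-written rule, not a Graph Neural Network, and the node names, readings, and tolerance are hypothetical.

```python
def inconsistent_nodes(reported_current_a, line_flows_a, tolerance_a=1.0):
    """Flag nodes reporting ~zero current while attached lines carry flow.

    reported_current_a: {node: amps reported by the node itself}
    line_flows_a: {(node_a, node_b): amps measured on the connecting line}
    """
    suspects = []
    for node, reported in reported_current_a.items():
        attached_flow = sum(
            abs(flow)
            for (a, b), flow in line_flows_a.items()
            if node in (a, b)
        )
        if abs(reported) <= tolerance_a and attached_flow > tolerance_a:
            suspects.append(node)
    return suspects


# Hypothetical readings: substation B claims zero while both lines are live.
reported = {"A": 120.0, "B": 0.0, "C": 118.0}
flows = {("A", "B"): 120.0, ("B", "C"): 118.0}
suspect_nodes = inconsistent_nodes(reported, flows)
print(suspect_nodes)
```

A flagged node is not proof of an attack; a failed sensor produces the same signature. The value of the check is that it turns a physical impossibility into an alert that can be correlated with network traffic.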

Modern defense strategies operate on multiple levels - monitoring individual devices, sharing data within secured enclaves, and exchanging alerts globally among utility operators. As the Kyndryl Readiness Report emphasizes:

"The only way to stay ahead of AI-enabled attacks will be to fight back with AI."

This emerging AI-versus-AI dynamic is reshaping how utilities safeguard critical infrastructure, with advanced machine learning tools now being wielded by both defenders and attackers alike.

Conclusion

AI is reshaping smart grids, turning them into predictive systems that excel in energy management, maintenance, and automation. Compared to older methods, grids enhanced with AI show marked improvements. For instance, reliability has improved sharply, with the average annual outage duration per customer (SAIDI) dropping from 142 minutes to just 38 minutes. Utilities using AI-driven predictive maintenance have also reported fewer emergency repairs, cutting maintenance costs by 25–30% and reducing equipment breakdowns by as much as 70–75%.

These advancements aren't just technical - they bring significant financial returns. For a medium-sized utility, an AI investment of $30–$49 million can lead to annual savings of $38–$55 million, with a payback period of around 10 months. This is achieved through fewer outages and longer equipment lifespans. As Piyush Mishra from Oracle points out:

"AI is not a cure-all, nor will it replace the deep expertise of grid operators. But as a force multiplier - delivering speed, scale, and precision - it could open new possibilities for utility leaders worldwide."

This transformation also creates exciting opportunities for professionals in the electrical and industrial sectors. The shift demands multidisciplinary teams, including software engineers to develop real-time control platforms, hardware engineers to design advanced sensors, and data scientists to analyze vast telemetry data. By late 2025, 41% of North American utilities are expected to have fully adopted AI, data analytics, and grid-edge intelligence. By 2030, projections suggest 70–80% of major grids will operate with AI platforms for forecasting and optimization.
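The payback period quoted earlier for a medium-sized utility can be checked with simple arithmetic. The sketch assumes savings accrue evenly through the year and pairs the low investment with the low savings figure and the high with the high, which the article does not state explicitly.

```python
def payback_months(investment_usd_m, annual_savings_usd_m):
    """Simple payback period in months, assuming evenly spread savings."""
    return 12 * investment_usd_m / annual_savings_usd_m


# Figures in millions of USD, from the ranges quoted for a mid-sized utility.
low_case = payback_months(investment_usd_m=30, annual_savings_usd_m=38)
high_case = payback_months(investment_usd_m=49, annual_savings_usd_m=55)
print(f"Payback: {low_case:.1f} to {high_case:.1f} months")
```

Both ends of the range come out between roughly 9.5 and 10.7 months, in line with the stated payback of around 10 months.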

To move forward, utilities can focus on key steps like conducting in-depth data audits to identify sensor gaps, running shadow mode pilots to test AI predictions, and investing in workforce training for machine learning, data science, and cybersecurity. Whether it's managing distributed energy resources, rolling out predictive maintenance, or deploying edge computing, the tools needed to create efficient and reliable grids are already within reach.
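A shadow mode pilot boils down to logging what the AI would have flagged, taking no action on it, and comparing against what actually happened. Here is a minimal sketch of that scorecard; the record format and field order are hypothetical.

```python
def shadow_mode_report(records):
    """Score AI flags against real outcomes without acting on them.

    records: list of (ai_flagged, actually_failed) booleans per asset.
    """
    flagged = sum(1 for ai_flagged, _ in records if ai_flagged)
    failures = sum(1 for _, failed in records if failed)
    true_hits = sum(1 for ai_flagged, failed in records
                    if ai_flagged and failed)
    return {
        # Share of real failures the model flagged in advance.
        "detection_rate": true_hits / failures if failures else None,
        # Share of the model's flags that turned out to be false alarms.
        "false_alarm_share": (flagged - true_hits) / flagged if flagged else None,
    }


# Hypothetical pilot log: five assets observed over the trial period.
pilot_log = [(True, True), (True, False), (False, True),
             (True, True), (False, False)]
report = shadow_mode_report(pilot_log)
print(report)
```

Tracking both numbers matters: a model that flags everything scores a perfect detection rate while burying crews in false alarms, so a pilot should set acceptance thresholds for each before the AI is allowed to trigger work orders.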

Electrical Trader is dedicated to supporting this evolution by providing top-tier electrical components essential for building smarter, more resilient grids.

FAQs

What data do utilities need to make AI forecasting work?

Utilities depend on real-time data gathered from grid sensors, IoT devices, weather reports, and economic trends. This constant flow of information allows AI to predict energy demand accurately and oversee grid operations efficiently.

How can a utility pilot AI without risking grid reliability?

Utilities can safely experiment with AI by adopting a careful, step-by-step approach within controlled "sandbox" environments. This means starting with non-critical tasks like handling regulatory paperwork or improving internal communications. By doing this, they can evaluate the tools without risking essential operations.

As pilots mature, utilities can move on to areas like forecasting, reliability, and load management, with AI advising human operators rather than controlling equipment autonomously. This cautious strategy keeps operational risk low and preserves grid stability as AI is gradually introduced.

What new cybersecurity risks come with AI and more connected devices?

The use of AI and connected devices in smart grids brings undeniable advancements, but it also opens the door to serious cybersecurity threats. Systems powered by AI, such as those handling voltage regulation or fault isolation, are particularly susceptible to attacks like data poisoning or adversarial manipulation. These types of breaches could result in severe consequences, including power outages or even damage to critical equipment.

Alarmingly, over 80% of these systems lack adequate security measures, leaving them exposed to cyber-physical attacks. To address this, utility providers need to focus on implementing stronger security protocols and taking proactive steps to identify and counter threats before they escalate.